So far in this mini-series, we’ve looked at the importance of listening to and engaging with your business, and why an innovation process is vital. This time, we’re going to consider two other vital components of the trial: setting objectives, and measuring results.

If you’ve gone to all the effort of scoping, building and delivering a trial, you’d want to know how it performed, right? A trial in itself is not a measure of success, so you need to know if it has been successful in solving a business challenge, or not.

A few years back, I visited a business that had installed wayfinding kiosks in its public spaces. They were huge touchscreen displays angled towards the user, and they looked very impressive, with animated maps, the ability to text navigation links to smartphones and other accessibility features. Clearly a great deal of thought had gone into the user experience, particularly in terms of adoption. Many of the kiosks had been installed directly after the automatic doors at the building entrances, deliberately interrupting foot traffic to steer people towards the kiosks.

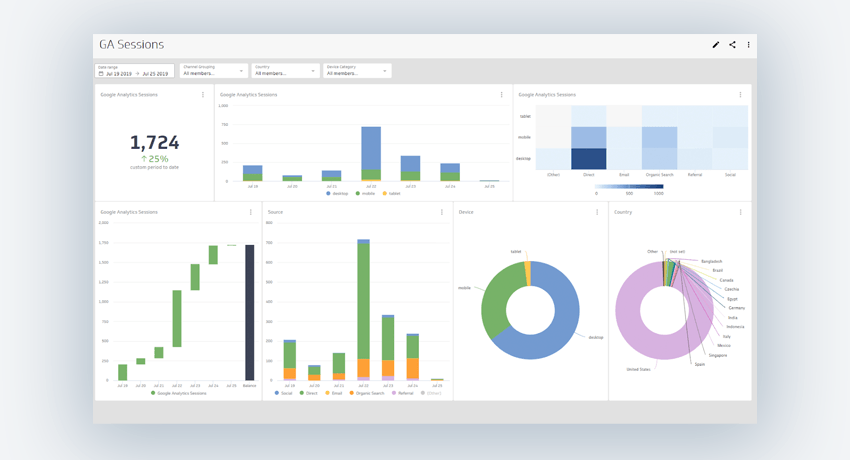

We asked lots of questions. One of them was around analytics: “What are the usage stats showing you?”, thinking that beyond qualitative observations of public usage, you’d also pick up some quantitative data. It’s one of the easiest levers to pull, given how simple it is to collect this data within the software.

The answer was a surprise: “The analytics package hasn’t gone in yet. Will be done for the next release.” That meant that, outside the times when someone could stand and observe people using the device, the team had no way of knowing whether the kiosks were being used at all, let alone which tasks users were completing or which navigation routes they were requesting. So the team behind what was undoubtedly some excellent work would have absolutely no idea if it was a success.

Your trial needs to be designed with measurement – and analytics – in mind from the start. Here, you should talk to your business challenge owner. They will know what the threshold for success is. So if they tell you that in order to succeed and secure a business case, you need, for example, 90% of customers to be positive about a solution, then that’s the level. It also serves as an instruction that, as part of the trial, you need to survey customers, so the trial design should accommodate this.
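For teams that track trial results in software, the threshold idea can be written down before the trial starts. The following is only a minimal Python sketch under assumed names: the `Objective` class, its fields and the survey counts are all hypothetical and are not taken from any real trial. It simply records the agreed target and checks a measured result against it.

```python
from dataclasses import dataclass


@dataclass
class Objective:
    """One success criterion agreed with the business challenge owner (hypothetical structure)."""
    name: str
    target: float  # minimum share needed for success, e.g. 0.90 for 90%

    def is_met(self, positive: int, total: int) -> bool:
        """Return True if the measured share reaches the agreed target."""
        if total <= 0:
            raise ValueError("no responses collected, so nothing to measure")
        return positive / total >= self.target


# Hypothetical survey counts for illustration only, not real trial data.
satisfaction = Objective(name="customers positive about the solution", target=0.90)
print(satisfaction.is_met(positive=184, total=200))  # 184 / 200 = 0.92, so the target is met
```

The point of the sketch is the order of events: the target is fixed first, and the survey that feeds `is_met` has to be part of the trial design, otherwise there is nothing to pass in.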

Next, we need to consider that there are different objective types: empirical evidence and insight. The former consists of the indisputable facts from the trial, analytics being a good example. If your solution performed the way that it should, you need evidence to record that fact. Insight is something different. This is what you learnt along the way, including things that you may not have expected.

I worked on a trial of a new screen display in which our business owner required that 5% of passing foot traffic could recall seeing it. No problem; after the installation, we got a customer survey team together, positioned them just beyond the display and had them canvass folks walking by. The results indicated that 7% of passers-by, when asked, recalled seeing the display – beating the target set – which, outwardly, would indicate that the trial was a success. But the insight gleaned from the overnight installation beforehand, where the displays were secured partly with duct tape, came as a surprise. The quality of that installation approach was as much of an issue as scaling any eventual solution, and the trial didn’t continue.

Perhaps the best analogy for this process is test-driving a new car. In advance, you’ll have done some research and, even if it’s just subconsciously, you’ll have some objectives. How does the car drive? Is there enough space? How easy is it to park? What’s the visibility like? Is it noisy? You’ll (hopefully) have researched some of the running costs in advance too. Yet on the day, you’ll discover lots of other things – the insights – that you wouldn’t have planned for. Maybe how feature-rich the in-car entertainment is, how the parking sensors work and how spacious it feels.

So the trial is a blend of the empirical evidence and the insight. If you have planned for neither in your trial, you’ve really failed before you’ve begun. Know what success needs to look like, so that you can tell how your trial has performed.

In the last instalment, we’ll look at what comes after the trial, and how you can scale to delivery.